Image Courtesy : https://blog.google/technology/ai/google-gemini-ai/#sundar-note

On 13th December 2023, GCP announced the Gemini API, which has been a hot topic of conversation!

Gemini is a family of generative AI models developed by Google DeepMind that is designed for multimodal use cases. The Gemini API gives you access to the Gemini Pro Vision and Gemini Pro models. A model that can analyze data from several modalities, such as text, video, and images, is called a multimodal model. You could, for instance, email the model a picture of a plate of cookies and ask it to provide you with the recipe.

Lets discuss about the Gemini Pro Vision model supports prompts that include text, code, images, and video, and can output text and code. To explore this model in the GCP console, select the gemini-pro-vision model card in the Model Garden. If you want learn more about how to write multimodal prompts check this link.

As per GCP’s documentation, Gemini Pro Vision excels at a wide variety of multimodal use cases such as Info Seeking, Object Recognition, Digital Content Understanding, Structured Content Generation, Captioning / Description, Extrapolations etc etc … There are a few limitations of that model as of now like not being able to extract any information beyond 2 minutes of the video and struggling with precise object/text localization in images.

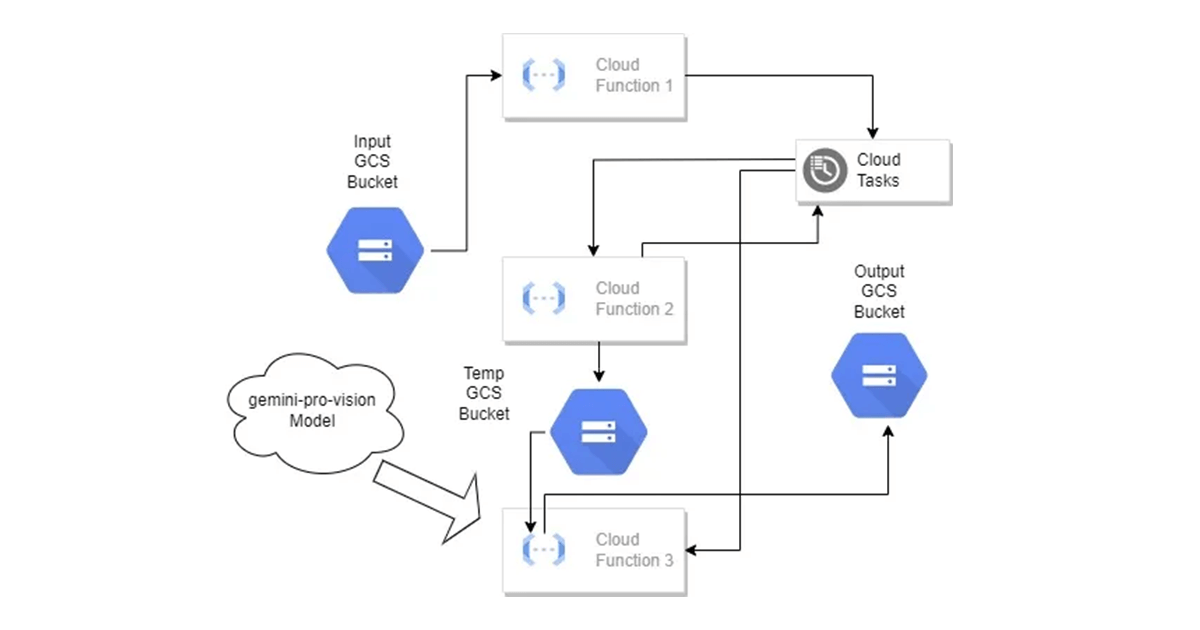

Lets discuss the pipeline created using GCP components like Cloud Functions, GCS buckets, Cloud tasks and last but not the least GCP’s Generative AI model i.e. Vertex AI’s Gemini API.

The pipeline starts with an input Google Cloud Storage bucket.

Cloud Function 1 triggers when an image or video is added to the bucket. The Cloud Function 1 then creates a Cloud Task which triggers Cloud Function 2.

Cloud Function 2 will read the image or video from input Google Cloud Storage bucket and encodes the data using convert_video_to_base64 or convert_image_to_base64 methods from base64 module in python. The Cloud Function 2 writes the output to a temporary GCS bucket and then creates a Cloud Task which triggers Cloud Function 3.

Cloud Function 3 will read the base64 encoded data from the temporary GCS bucket and will feed the data to the Gemini-Pro Vision model to process the data according to various prompt requirements that we will discuss below and writes the final output returned by the generative model to an output GCS bucket. For processing Images and Videos we need to use different Gemini-Pro Vision models which use appropriate prompts created as per the use case need.

Now you might wonder about couple of things we discussed above.

First of all, what is the need to use base64 encoding and decoding in the pipeline. You can read that here.

Also, why are we writing the data generated from Cloud Function 2 on a temp GCS bucket instead of directly sending the data across with the Cloud Task request payload that triggers Cloud Function 3. Reason to add that extra step is, Cloud Tasks have a Maximum task size limit of 1 MB that can be added to a queue. And the encoded data from an Image or Video can be bigger than that. So instead of sending actual encoded data across, we store the encoded data on a temp GCS bucket and pass the location of that object to Cloud Function 3 in the request payload that we created from Cloud Task created in Cloud Function 2.

Lets discuss some of the simple use cases that we can create using the capabilities of Gemini Pro Vision model.

1. Creating an Advertisement from a video

Input video Courtesy to Pexels:

Putting Ice On A Glass Of Coke Free Stock Video Footage, Royalty-Free 4K & HD Video Clip (pexels.com)

Prompt used to process the video: “Please provide ad for the video and some details about the target audience, and any key messages benefits.”

Response from the model: “**Coca-Cola: The Pause That Refreshes**

**Target audience:** This ad is targeted at young adults who are looking for a refreshing and delicious drink.

**Key messages:**

* Coca-Cola is the perfect drink to enjoy on a hot day.

* Coca-Cola is a great way to quench your thirst.

* Coca-Cola is a delicious and refreshing way to take a break.**Benefits:**

* Coca-Cola is a delicious and refreshing way to take a break.

* Coca-Cola is a great way to quench your thirst.

* Coca-Cola is the perfect drink to enjoy on a hot day.**Call to action:**

* Enjoy a Coca-Cola today!”

2. Writing creative story from an image

Prompt used to process the image: “Create fictional characters from the image and tell a motivational/good story.”

Response from the model: “The image shows three friends jumping in the air. They are all smiling and look happy. This image could be used to motivate people to get out and enjoy the winter weather. It could also be used to illustrate the importance of friendship and having fun.

Here is a fictional story about the three friends in the image:

The three friends had been planning this trip for months. They had all been looking forward to spending some time together and enjoying the winter weather. They had chosen to go to a nearby park that had a beautiful snow-covered trail.

When they arrived at the park, they were amazed at how beautiful it was. The snow was sparkling in the sunlight, and the trees were covered in a thick blanket of white. They decided to take a walk on the trail, and they soon found themselves surrounded by the beauty of nature.

As they walked, they talked and laughed. They shared stories about their lives and their hopes for the future. They talked about their dreams and their goals. They talked about the things they were grateful for and the things they wanted to change in the world.

They walked for hours, and they didn’t even notice the cold. They were too busy enjoying each other’s company and the beauty of the winter wonderland around them. When it was time to go, they were all sad to leave. But they knew that they would never forget this day. This was a day that they would cherish forever.

This story is a reminder that the best things in life are often the simplest. Spending time with friends, enjoying the beauty of nature, and sharing laughter and conversation are all things that make life worth living.”

3. Describing video content

Input video Courtesy to Pexels:

Video by Vlada Karpovich from Pexels: https://www.pexels.com/video/couple-preparing-food-in-the-kitchen-6998420/

Prompt used to process the video: “describe this video, sequence by sequence”

Response from the model: “ A man and a woman are cooking together in the kitchen. The woman is pouring milk from a bottle into a glass. The man is cracking eggs into a bowl.”

4. Video Question-Answer

Input video Courtesy to Pexels:

Video by Kelly from Pexels: https://www.pexels.com/video/group-of-boys-plays-soccer-in-a-soccer-field-2932301/

Prompt used to process the video: “What kind of activity are the individuals in the video engaged in? And describe this video, sequence by sequence.”

Response from the model: “The individuals in the video are engaged in a game of soccer.

Sequence 1: A player in a blue jersey kicks the ball towards the goal.

Sequence 2: A player in a red jersey intercepts the ball and dribbles it past the blue player.

Sequence 3: The red player passes the ball to a player in a white jersey.

Sequence 4: The white player shoots the ball into the goal.”

In conclusion, by implementing these foundational pipelines on Google Cloud Platform, we’ve laid the groundwork for unleashing the full potential of the generative AI models. May your pipelines be efficient, your models be creative, and your journey into the realm of Generative AI be both rewarding and innovative. Thank you for reading this article. Your time is appreciated. Until next time, stay curious !!

Indeed, you correctly guessed it! To add a dash of irony, a Generetive AI model was used to construct the blog’s conclusion paragraph above 🙂